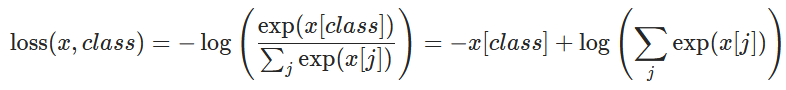

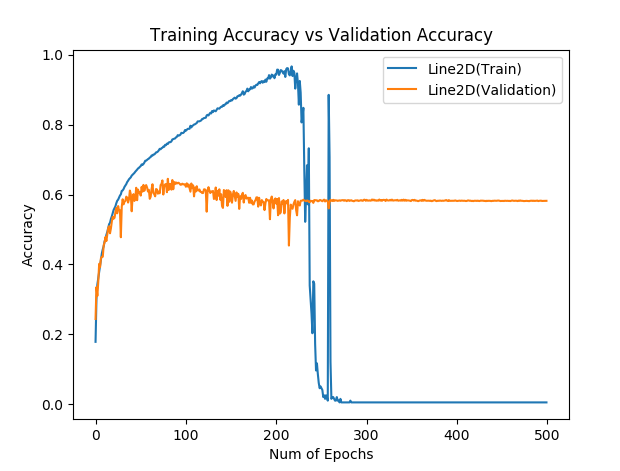

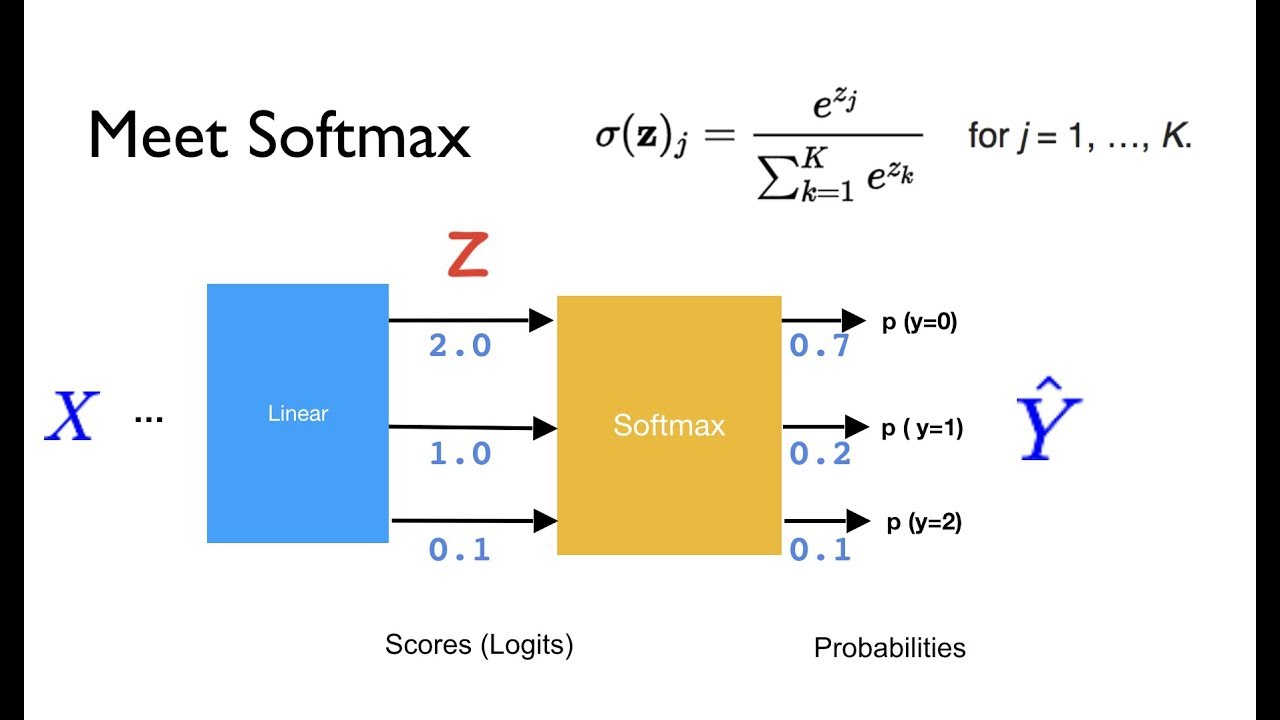

On Wed,, 06:26 Hayat ullah, wrote:

Hi, I am working on a project related to person re-identification. I am trying to re-implement the code of a CVPR paper entitled "ABD-Net: Attentive but Diverse Person Re-Identification". I trained the ABD-Net architecture with ResNet and DenseNet backbones, but when I try to train it with a ShuffleNet backbone I get this error:

```
    train(epoch, model, criterion, regularizer, optimizer, trainloader, use_gpu, fixbase=True)
  File "C:\Users\Hayat\AppData\Local\Continuum\anaconda3\lib\site-packages\torch\nn\modules\module.py", line 493, in __call__
  File "F:\hayat ullah work\Attention Code\paper 2_new code\torchreid\losses\cross_entropy_loss.py", line 56, in forward
  File "F:\hayat ullah work\Attention Code\paper 2_new code\torchreid\losses\cross_entropy_loss.py", line 52, in _forward
    return sum() / len(inputs_tuple)
  File "F:\hayat ullah work\Attention Code\paper 2_new code\torchreid\losses\cross_entropy_loss.py", line 52, in
  File "F:\hayat ullah work\Attention Code\paper 2_new code\torchreid\losses\cross_entropy_loss.py", line 32, in apply_loss
  File "C:\Users\Hayat\AppData\Local\Continuum\anaconda3\lib\site-packages\torch\nn\modules\activation.py", line 1179, in forward
    return F.log_softmax(input, self.dim, _stacklevel=5)
  File "C:\Users\Hayat\AppData\Local\Continuum\anaconda3\lib\site-packages\torch\nn\functional.py", line 1350, in log_softmax
IndexError: Dimension out of range (expected to be in range of , but got 1)
```

Just change the image file and the text data so that the number of images and text entries correspond to each other.
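The `IndexError` above is what PyTorch raises when `log_softmax` is asked to normalize over `dim=1` of a tensor that has only one dimension, i.e. the classifier output has lost its batch dimension. The thread does not say why the ShuffleNet variant produces 1-D logits; one common cause, assumed here purely for illustration, is calling `.squeeze()` on pooled features of shape `(1, C, 1, 1)`, which also drops the batch dimension when a batch holds a single image. The sketch below reproduces the error and shows that `flatten(start_dim=1)` keeps the `(batch, num_classes)` shape the loss expects. The 512-channel feature size and the 751-class classifier are arbitrary example values, not taken from ABD-Net.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Hypothetical pooled backbone output for a batch of ONE image:
# (batch, channels, 1, 1). The sizes are illustrative assumptions.
pooled = torch.randn(1, 512, 1, 1)

# Pitfall: squeeze() drops EVERY size-1 dimension, including the batch dim.
squeezed = pooled.squeeze()          # shape (512,)

# Safer: keep dim 0 (the batch) and flatten everything after it.
flat = pooled.flatten(start_dim=1)   # shape (1, 512)

classifier = nn.Linear(512, 751)     # 751 classes is an arbitrary example value
logits_bad = classifier(squeezed)    # shape (751,): 1-D, batch dim is gone
logits_ok = classifier(flat)         # shape (1, 751): (batch, num_classes)

# log_softmax over dim=1 needs at least 2 dimensions, so 1-D logits fail.
try:
    F.log_softmax(logits_bad, dim=1)
except IndexError as err:
    print("1-D logits fail:", err)

log_probs = F.log_softmax(logits_ok, dim=1)
print(log_probs.shape)  # torch.Size([1, 751])
```

Printing the feature shape just before the classifier is a quick way to check whether the ShuffleNet path is where the batch dimension disappears.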

```
ValueError                                Traceback (most recent call last)
      6 target = torch.ones(1, 3, dtype=torch.long)

~/pytorch/torch/nn/modules/module.py in __call__(self, *input, **kwargs)
    489     result = self._slow_forward(*input, **kwargs)
--> 491     result = self.forward(*input, **kwargs)
    492     for hook in self._forward_hooks.values():
    493         hook_result = hook(self, input, result)

~/pytorch/torch/nn/modules/loss.py in forward(self, input, target)
    192     return F.nll_loss(input, target, self.weight, self.size_average,

~/pytorch/torch/nn/functional.py in nll_loss(input, target, weight, size_average, ignore_index, reduce)
   1337     if dim
   1338         raise ValueError('Expected 2 or more dimensions (got )'.format(dim))

ValueError: Expected 2 or more dimensions (got 1)
```
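The `ValueError` above comes from `nn.NLLLoss`, which in the PyTorch build shown rejected a 1-D input. As a hedged reference for the shapes the loss accepts (per the PyTorch `NLLLoss` documentation): log-probabilities shaped `(N, C)` with class-index targets shaped `(N,)`, or the K-dimensional form `(N, C, d1)` with targets shaped `(N, d1)`. A target built as `torch.ones(1, 3, dtype=torch.long)` therefore pairs with an input of shape `(1, C, 3)`, not with a 1-D tensor. The batch size `N = 4` and class count `C = 10` are arbitrary example values.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

criterion = nn.NLLLoss()  # default reduction: mean over all target elements

# Standard classification: input (N, C) log-probabilities, target (N,) class indices.
N, C = 4, 10
log_probs = F.log_softmax(torch.randn(N, C), dim=1)
target = torch.randint(0, C, (N,))
loss_2d = criterion(log_probs, target)

# K-dimensional case: input (N, C, d1), target (N, d1).
# A (1, 3) long target, as in the traceback, matches an input of shape (1, C, 3).
log_probs_kd = F.log_softmax(torch.randn(1, C, 3), dim=1)
target_kd = torch.ones(1, 3, dtype=torch.long)
loss_kd = criterion(log_probs_kd, target_kd)

print(loss_2d.dim(), loss_kd.dim())  # 0 0  (both losses are scalars)
```

In the K-dimensional case, every target entry is class `1`, so the loss is simply the negative mean of `log_probs_kd[0, 1, :]`.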
